Data plays a critical part in the operating efficiency of modern businesses. Having up-to-date reports and data on hand supports decisions that keep enterprises ahead of the competition. It is therefore not surprising that organizations are adopting optimized data management systems and tools, replacing existing database platforms with newer, more advanced ones that reduce the workload of database administrators. One route that is frequently taken now is migrating databases from Microsoft SQL Server to Snowflake in order to make optimized use of Snowflake Data Lake.

Before diving into the process of migration, a quick overview of each of them will be in order.

Microsoft SQL Server

The Microsoft database management ecosystem has been one of the leaders in this field because of its many benefits. SQL Server is a Relational Database Management System (RDBMS) and supports Microsoft’s .NET framework out of the box. It can serve applications on a single machine, across a local area network, or over the web, and has long been the foundation of business analytics and transaction processing in many IT and corporate structures. Microsoft SQL Server, along with IBM’s DB2 and Oracle Database, is considered to be among the top three database technologies. It is built around SQL, the query language commonly used by database administrators to query the data in it.

Data Lake

A data lake is a centralized repository where data of any volume, both structured and unstructured, can be stored. This data can then be used to run analytics, dashboards, big data visualizations, and more. A data lake enables organizations to run real-time analytics and make decisions that keep them ahead of the competition. Analytics deployed over a data lake can include machine learning and can draw on sources such as social media, log files, and Internet-connected devices.

Snowflake Data Lake provides the most optimized solutions that facilitate and improve any data lake strategy. The advantage is that being entirely a cloud-based architecture, it is very adaptable to the specific needs of any organization. Components of data lake design patterns can be mixed and matched to get the best out of Snowflake Data Lake.

Snowflake

An analysis of Snowflake shows that it is ideal for database management.

One of the comparatively new entrants to database management systems is Snowflake, a data warehousing solution that is based entirely in the cloud.

There are several benefits of Snowflake.

- Multiple points to access workloads – Multiple workgroups can work simultaneously on multiple workloads as the same data is available to all. In the process, there is no degradation in the performance of Snowflake.

- High-performing database – Snowflake keeps compute and storage separate. Users can scale either one up or down independently as requirements change and pay only for the resources used.

- Support to multiple cloud vendors – The Snowflake architecture provides support to multiple cloud vendors and the list is getting longer by the day. Users can, therefore, use the same set of tools to work on different cloud vendors.

- Migrating various data types – A very important feature of Snowflake is that both structured and semi-structured data can be loaded into its database. Semi-structured formats supported include JSON, Avro, XML, and Parquet, among others.

- Optimized support – Snowflake automatically clusters data and no indexes have to be defined. For very large tables, clustering keys may be used to co-locate table data.

- Unlimited storage and computing – Being cloud-based, Snowflake provides unlimited storage and computing facilities with a high degree of flexibility. Organizations can use them as per requirements, and make use of extra facilities whenever necessary without investing in additional hardware and software infrastructure.

With all these advantages, more and more businesses are opting to migrate databases from SQL Server to get the maximum out of Snowflake Data Lake.

Migrating databases from SQL Server to Snowflake

The process of migration involves certain key steps.

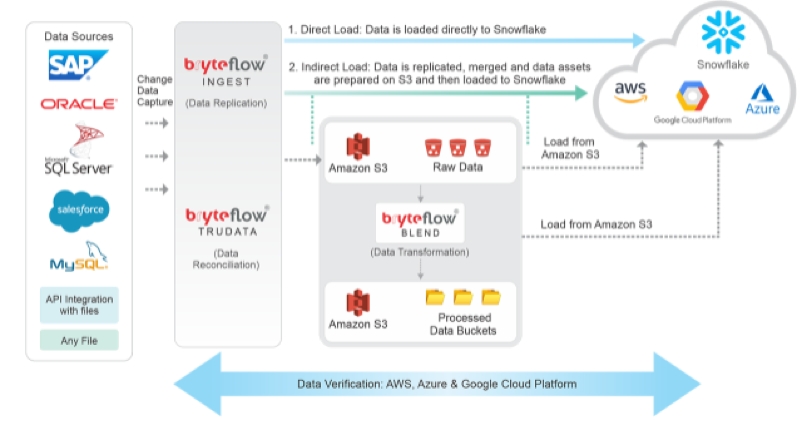

- Mining data from SQL Server – Extracting data from the SQL Server database is the first step and is most commonly done through extraction queries. The data can be filtered and sorted with SELECT statements. Microsoft SQL Server Management Studio can be used to export bulk data or entire databases in text or CSV format.

- Preparing data for migration – The extracted data cannot be loaded directly into Snowflake; it first has to be processed so that it matches the data types Snowflake supports. Each extracted column should be checked against those types. However, it is not necessary to specify a schema beforehand when migrating JSON or XML data into Snowflake.

- Staging the data files – The processed data files cannot be migrated to Snowflake directly either; they first have to be placed in a temporary location, which is known as staging the data files. There are two kinds of stage: internal and external. An internal stage is created by the user with SQL statements, and a name and file format are assigned to it. This makes the SQL Server to Snowflake migration process easier and more flexible. For data kept in an external stage, Snowflake supports Amazon S3, Microsoft Azure, and Google Cloud Storage.

- Loading data to Snowflake – The data is finally ready for migration into Snowflake. Snowflake’s data loading wizard can be used for smaller data sets, while the COPY INTO command is used to load data from an internal or external stage.
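
The four steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a complete migration tool: the type mapping is a small, non-exhaustive assumption, and the table, stage, and file names in the usage note are hypothetical. The functions only build CSV text and SQL strings; actually running the statements would need a Snowflake client, which is left out here.

```python
import csv
import io

# Illustrative SQL Server -> Snowflake type mapping.
# Assumption: a small, non-exhaustive sample; verify against your own schema.
TYPE_MAP = {
    "BIT": "BOOLEAN",
    "NVARCHAR": "VARCHAR",
    "DATETIME2": "TIMESTAMP_NTZ",
    "DATETIMEOFFSET": "TIMESTAMP_TZ",
    "UNIQUEIDENTIFIER": "VARCHAR(36)",
    "MONEY": "NUMBER(19,4)",
}


def to_snowflake_type(sql_server_type: str) -> str:
    """Return the mapped Snowflake type, or the input (upper-cased) if unmapped."""
    key = sql_server_type.strip().upper()
    return TYPE_MAP.get(key, key)


def rows_to_csv(header, rows) -> str:
    """Serialize extracted rows to CSV text, quoting every field."""
    buf = io.StringIO()
    writer = csv.writer(buf, quoting=csv.QUOTE_ALL, lineterminator="\n")
    writer.writerow(header)
    writer.writerows(rows)
    return buf.getvalue()


def load_statements(table: str, stage: str, local_path: str) -> list:
    """Build the staging and loading statements for one local CSV file."""
    return [
        # Internal stage with a CSV file format attached to it.
        f"CREATE STAGE IF NOT EXISTS {stage} "
        "FILE_FORMAT = (TYPE = CSV FIELD_OPTIONALLY_ENCLOSED_BY = '\"' SKIP_HEADER = 1)",
        # Upload the local file into the internal stage.
        f"PUT file://{local_path} @{stage}",
        # Load the staged file into the target table.
        f"COPY INTO {table} FROM @{stage}",
    ]
```

For example, `load_statements("customers", "customers_stage", "/tmp/customers.csv")` returns three statements to run in order: create the stage, upload the file with `PUT`, and load it with `COPY INTO`. Keeping the statement builder separate from the connection code makes it easy to review the SQL before anything is executed.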

Finally, it is preferable to build a script that continually detects fresh data in the source database and uses an auto-incrementing field to load only the data that is new or has changed. If this is not done, organizations would have to run complete data refreshes whenever anything changes at the source, which wastes time and resources.
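
One way to sketch such a script is with a "watermark": remember the largest auto-incrementing value already migrated and ask the source only for rows above it. The example below is a minimal sketch under assumptions: the table and column names are hypothetical, and the `?` placeholder assumes a driver that uses question-mark parameters. Note that an auto-incrementing key on its own only reveals newly inserted rows; picking up edits to existing rows would likely need an additional marker such as a last-modified column.

```python
def incremental_query(table: str, key_column: str, last_seen: int):
    """Return a parameterized query that selects only rows newer than last_seen."""
    sql = f"SELECT * FROM {table} WHERE {key_column} > ? ORDER BY {key_column}"
    return sql, (last_seen,)


def next_watermark(rows, key_index: int, last_seen: int) -> int:
    """Advance the watermark to the largest key in the batch, or keep it if empty."""
    return max((row[key_index] for row in rows), default=last_seen)


# Usage with hypothetical names: dbo.orders table, order_id auto-increment key.
sql, params = incremental_query("dbo.orders", "order_id", 100)
batch = [(101, "widget"), (105, "gadget")]  # stand-in for rows fetched from the source
watermark = next_watermark(batch, key_index=0, last_seen=100)
```

After each run the new watermark would be stored somewhere durable, so the next run starts where the previous one stopped instead of re-extracting the whole table.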

Most of the steps in migrating databases from SQL Server to Snowflake Data Lake are best done with specialized, automated tools that can be operated through a point-and-click interface.

Laila Azzahra is a professional writer and blogger who loves to write about technology, business, entertainment, science, and health.